Project Overview

This project demonstrates how deep learning models can automatically interpret complex urban street environments using pixel-level semantic segmentation. Each pixel in an image is classified into meaningful categories such as roads, vehicles, pedestrians, buildings, and vegetation.

The system is designed to perform reliably even with limited data and constrained computational resources, making it suitable for startups, mobility companies, and public-sector organizations.

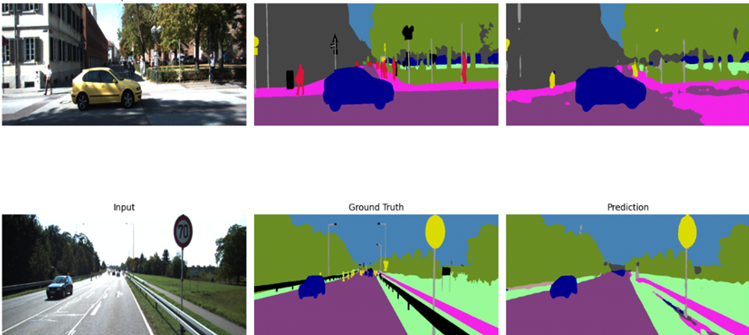

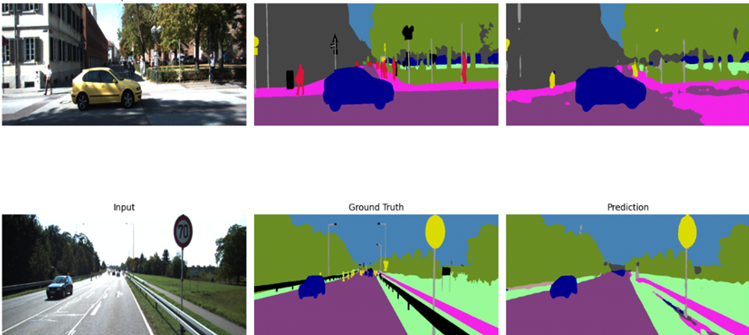

Project Results & Visual Output

Below are sample outputs from the semantic segmentation model, showcasing accurate pixel-level classification of urban street scenes.

Semantic segmentation output of urban street scene.

Problem Statement

Many organizations working with traffic analytics, autonomous systems, and smart-city applications struggle to extract structured insights from raw street-view imagery. Manual annotation is expensive, and many AI solutions require large datasets and costly infrastructure.

Project Goal

To develop a cost-efficient and scalable AI system that delivers accurate street scene understanding without relying on enterprise-level data collection or hardware resources.

Key Features & Achievements

- Implemented two deep learning models (DeepLabv3 and LR-ASPP with a MobileNetV3 backbone) for pixel-level street scene segmentation

- Optimized models to balance accuracy and inference speed

- Applied transfer learning to reduce training cost and improve performance

- Designed the system for deployment on cloud platforms and edge hardware

- Achieved approximately 95% pixel-level accuracy on urban scenes
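
Pixel-level accuracy is easiest to interpret next to a per-class metric, because large classes such as road and building dominate the pixel count. The sketch below is not the project's evaluation code. It is a minimal, dependency-free illustration of how pixel accuracy and mean intersection-over-union (mIoU) can be derived from one confusion matrix. The function names and the `IGNORE_INDEX` value of 255 are assumptions made for this example; with PyTorch tensors, the prediction and label maps would first be flattened to integer sequences.

```python
from typing import List, Sequence

IGNORE_INDEX = 255  # assumption: label value for pixels excluded from scoring


def confusion_matrix(pred: Sequence[int], target: Sequence[int],
                     num_classes: int,
                     ignore_index: int = IGNORE_INDEX) -> List[List[int]]:
    """Count pixels as matrix[true_class][predicted_class]."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for p, t in zip(pred, target):
        if t == ignore_index:
            continue  # unlabeled pixel, not scored
        matrix[t][p] += 1
    return matrix


def pixel_accuracy(matrix: List[List[int]]) -> float:
    """Fraction of scored pixels whose predicted class matches the label."""
    correct = sum(matrix[c][c] for c in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total if total else 0.0


def mean_iou(matrix: List[List[int]]) -> float:
    """Average of TP / (TP + FP + FN) over classes that appear at all."""
    n = len(matrix)
    ious = []
    for c in range(n):
        tp = matrix[c][c]
        fn = sum(matrix[c]) - tp                       # labeled c, predicted otherwise
        fp = sum(matrix[r][c] for r in range(n)) - tp  # predicted c, labeled otherwise
        denom = tp + fp + fn
        if denom:
            ious.append(tp / denom)
    return sum(ious) / len(ious) if ious else 0.0


if __name__ == "__main__":
    # Toy case: 8 "road" pixels (class 0) and 2 "pedestrian" pixels (class 1),
    # with a model that predicts "road" everywhere.
    target = [0] * 8 + [1] * 2
    pred = [0] * 10
    m = confusion_matrix(pred, target, num_classes=2)
    print(f"pixel accuracy: {pixel_accuracy(m):.2f}")  # pixel accuracy: 0.80
    print(f"mean IoU:       {mean_iou(m):.2f}")        # mean IoU:       0.40
```

In the toy case the small class is missed entirely, yet pixel accuracy stays at 0.80 while mIoU drops to 0.40. Reporting both numbers makes that kind of gap visible.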

Technologies Used

AI / Machine Learning

- Semantic Segmentation

- Transfer Learning

Frameworks

- PyTorch

- Torchvision

Models

- DeepLabv3

- LR-ASPP with a MobileNetV3 backbone

Dataset & Hardware

- Cityscapes Dataset

- GPU-based Cloud Training
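
Cityscapes annotations store raw label IDs, and most training setups remap them to 19 contiguous training IDs while sending everything else to an ignore value. The source does not say which label set this project trained on, so the snippet below is only a sketch of that common preprocessing step. The ID pairs follow the public Cityscapes label definitions; the helper name `to_train_ids` and the ignore value of 255 are assumptions made for this example.

```python
from typing import Dict, List

IGNORE_INDEX = 255  # assumption: value assigned to labels outside the 19 classes

# (raw Cityscapes label ID, class name), listed in training-ID order 0..18.
CITYSCAPES_TRAIN_CLASSES = [
    (7, "road"), (8, "sidewalk"), (11, "building"), (12, "wall"),
    (13, "fence"), (17, "pole"), (19, "traffic light"), (20, "traffic sign"),
    (21, "vegetation"), (22, "terrain"), (23, "sky"), (24, "person"),
    (25, "rider"), (26, "car"), (27, "truck"), (28, "bus"),
    (31, "train"), (32, "motorcycle"), (33, "bicycle"),
]

ID_TO_TRAIN_ID: Dict[int, int] = {
    label_id: train_id
    for train_id, (label_id, _name) in enumerate(CITYSCAPES_TRAIN_CLASSES)
}


def to_train_ids(label_rows: List[List[int]]) -> List[List[int]]:
    """Remap a 2-D grid of raw label IDs to training IDs (or IGNORE_INDEX)."""
    return [
        [ID_TO_TRAIN_ID.get(value, IGNORE_INDEX) for value in row]
        for row in label_rows
    ]


if __name__ == "__main__":
    # road, car, and an unlabeled pixel (raw ID 0)
    print(to_train_ids([[7, 26, 0]]))  # [[0, 13, 255]]
```

The same lookup can be applied to whole label images with a tensor or array index table once the data is loaded.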

Use Cases

- Autonomous driving and advanced driver-assistance systems (ADAS)

- Smart-city analytics and urban planning

- Traffic monitoring and optimization

- Infrastructure and urban development analysis

- Robotics and real-world computer vision applications

What This Project Demonstrates

This project showcases the ability to build production-ready AI systems for computer vision applications. It highlights expertise in designing models optimized for accuracy, performance, and cost efficiency.